You built the app fast. Maybe faster than you thought possible.

A few prompts, a few iterations, and suddenly you have something real: a patient intake tool, a workflow app, a clinical dashboard, or the early version of a product you want to launch.

In this episode of the HIPAA Insider Show, we break down what happens next when an AI-built healthcare app moves from prototype to real-world use with patient data.

Now comes the question that matters:

Can this app safely handle patient data?

That is where the easy part ends.

AI can help you build software quickly, but it does not automatically make that software ready for regulated healthcare use. Once an app creates, receives, maintains, or transmits electronic protected health information (ePHI), the standard changes. At that point, the issue is no longer just whether the application works. The issue is whether the environment around it can protect ePHI with the administrative, physical, and technical safeguards required by HIPAA. HHS says as much in its Security Rule guidance, and NIST’s SP 800-66 Rev. 2 is the current cybersecurity resource guide for implementing those requirements in practice.

That is the real gap in healthcare AI right now: building the app is getting easier, but deploying it responsibly is still hard.

If you are at that stage already, this is where HIPAA Vault fits naturally. The challenge is no longer “how do we code this?” The challenge is “how do we move this into a production environment that supports HIPAA compliance, secure operations, and ongoing maintenance?”

→ Explore HIPAA Hosting Solutions to move into a safer production environment with the infrastructure and support healthcare workloads demand.

A working prototype is not the same as a HIPAA-ready application

This is the first thing many founders, clinicians, and operators underestimate.

A prototype proves the workflow. It shows the app can function. It may even look polished enough to demo to colleagues, partners, or early customers. But a healthcare deployment has to do much more than function. It has to control access, protect data at rest and in transit, support secure administration, and hold up over time as users, systems, and risks change. HHS explains that the HIPAA Security Rule protects ePHI through administrative, physical, and technical safeguards, and its guidance materials emphasize risk analysis and risk management as continuing obligations rather than one-time tasks.

That is why an AI-built app can feel finished and still be nowhere near ready for patient data.

Gil says it in the episode with one of the best lines in the whole conversation:

“You have to find a home for your application.”

That line is simple, but it gets to the point faster than most technical explanations do. A development tool can help you build the app. A real healthcare environment has to help you protect what the app handles.

The moment your app touches ePHI, the conversation changes

As long as you are testing with fake data in a local or sandbox environment, you are still mostly in product mode.

The moment the app is expected to handle real patient information, you move into a different category of risk.

Now the important questions are different:

– Who can access the data?

– Where is it stored?

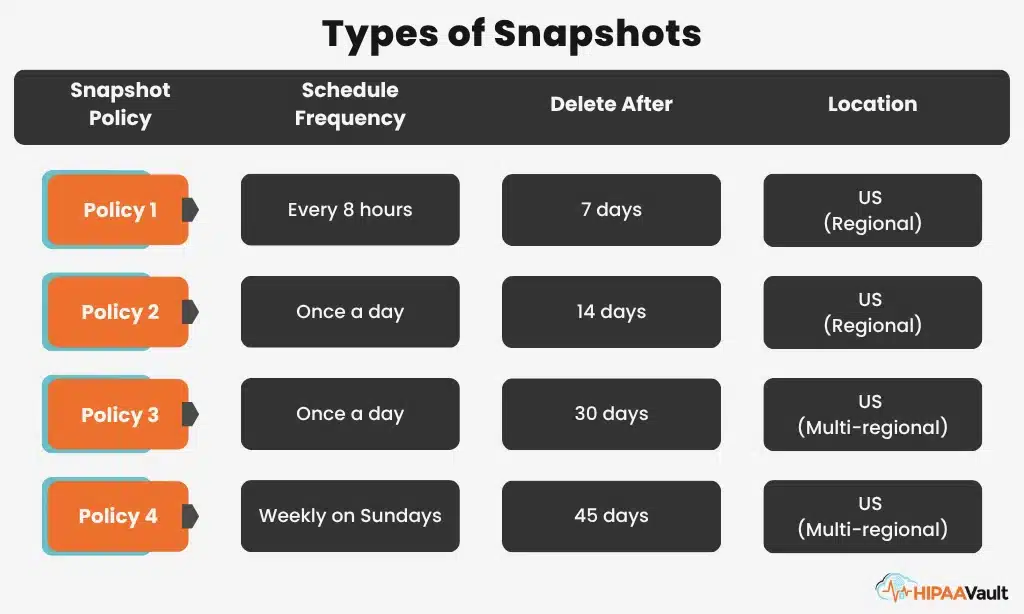

– How is it backed up?

– How is it monitored?

– What vendors are involved?

– Can those vendors sign a Business Associate Agreement?

– Who is responsible for patching, logging, scanning, and maintaining the environment?

HHS states that the Security Rule establishes national standards to protect ePHI created, received, used, or maintained by covered entities. Its cloud guidance adds that a covered entity or business associate may use a cloud service to store or process ePHI only if it enters into a HIPAA-compliant BAA with the cloud service provider and otherwise complies with the HIPAA Rules.

That is why “it works” is not the finish line in healthcare. It is just the start of a more serious phase.

The first real filter is not price. It is the BAA.

A lot of teams start by comparing storage, compute, or monthly cost.

That comparison belongs later in the process.

The first practical question is much simpler:

Will this provider sign a BAA for the service being used?

That question filters out bad options immediately.

HHS explains that if a covered entity engages a business associate to help carry out healthcare activities and functions, the covered entity must have a written business associate contract or other arrangement, and that business associates must protect the privacy and security of protected health information as required by the HIPAA Rules. HHS also states that cloud service providers can be used for ePHI only when a HIPAA-compliant BAA is in place.

So if a vendor will not sign a BAA, that is not a minor inconvenience. It is a stop sign.
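
As a rough illustration of that stop sign, the sketch below keeps a small vendor inventory and lists every service that would touch ePHI without a signed BAA. The `Vendor` record, the `vendors_blocking_launch` helper, and all vendor names are hypothetical and exist only for this example. The check is only as accurate as the inventory behind it, so it supports a vendor review rather than replacing one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    """One third-party service the app depends on (hypothetical record shape)."""
    name: str
    handles_ephi: bool  # does ePHI ever reach this service?
    baa_signed: bool    # is a signed BAA on file for this specific service?


def vendors_blocking_launch(vendors: list[Vendor]) -> list[str]:
    """Return the names of vendors that touch ePHI without a signed BAA."""
    return [v.name for v in vendors if v.handles_ephi and not v.baa_signed]


# Illustrative inventory; every name here is made up.
inventory = [
    Vendor("example-cloud-host", handles_ephi=True, baa_signed=True),
    Vendor("example-email-api", handles_ephi=True, baa_signed=False),
    Vendor("example-font-cdn", handles_ephi=False, baa_signed=False),
]

blockers = vendors_blocking_launch(inventory)
print(blockers)  # ['example-email-api']
```

An empty result is the condition to aim for before real patient data enters the system. A vendor that never sees ePHI, like the font CDN in this made-up inventory, does not trip the check.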

This is where a healthcare-focused hosting provider becomes much more relevant than a generic platform.

→ Need a safer place to run your healthcare app?

Explore HIPAA Hosting Solutions for infrastructure built to support HIPAA-regulated workloads.

AI can speed up development, but it cannot secure the operating environment by itself

This is the biggest misunderstanding in the current wave of AI app building.

AI can generate forms, dashboards, authentication flows, APIs, and data structures. It can help a small team create in far less time something that would once have taken months. But the real-world operating environment around the app still has to be designed, configured, and maintained by people who understand healthcare risk.

That includes decisions about access control, server hardening, secure backups, monitoring, vulnerability management, patching, logging, vendor relationships, and documentation. NIST’s SP 800-66 Rev. 2 exists specifically to help regulated entities translate the HIPAA Security Rule into practical cybersecurity implementation steps, which is a reminder that compliance is operational, not magical.

In other words, AI can help you write the application. It cannot, by itself, make the whole system safe enough for real healthcare use.

What moving from prototype to production actually looks like

This is the section most readers are really looking for.

Once the app is built, the next step is not endless polishing. The next step is operational review.

Start by reviewing what the application really does. Confirm what it stores, what third-party services it connects to, how users authenticate, where uploads go, what logs are generated, and whether real data has already been mixed with test data. This lines up with HHS’s repeated emphasis on identifying risks to the confidentiality, integrity, and availability of ePHI as part of Security Rule compliance.
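
One part of that review, checking whether real data has crept into test data, can be partly automated. The sketch below is a heuristic under stated assumptions: the two patterns and the `flag_suspicious_fields` helper are invented for this example, and a match only means a person should look at the field, not that the value is PHI. Realistic synthetic data will also trigger matches, and real data in other formats will not.

```python
import re

# Heuristic patterns only (assumptions for this sketch).
# A match means "have a person look", not "this is PHI".
SUSPICIOUS_PATTERNS = {
    "ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "us_phone_like": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def flag_suspicious_fields(record: dict[str, str]) -> dict[str, list[str]]:
    """Map each field name to the pattern labels its value matched."""
    findings: dict[str, list[str]] = {}
    for field, value in record.items():
        hits = [
            label
            for label, pattern in SUSPICIOUS_PATTERNS.items()
            if pattern.search(value)
        ]
        if hits:
            findings[field] = hits
    return findings


# A made-up test fixture record.
sample = {
    "name": "Test Patient",
    "note": "Call 555-010-1234 about follow-up",
    "id": "123-45-6789",
}

findings = flag_suspicious_fields(sample)
print(findings)  # {'note': ['us_phone_like'], 'id': ['ssn_like']}
```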

Then look at migration. Most AI development platforms let you export code, and many also let you export databases or related assets. That sounds simple until PHI is involved. At that point, the export itself becomes part of the risk profile, because now the question is not just where the app will live, but how sensitive data gets from one place to another.
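
One small, concrete control during a migration is confirming that an export arrived unchanged. The sketch below uses Python's standard `hashlib` to compare SHA-256 digests of a file before and after a copy. The file names and the local copy standing in for a transfer are assumptions for the example. A matching checksum shows integrity only. It says nothing about who could read the data along the way, so encryption in transit and access controls still have to be handled separately.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large exports do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# A local copy stands in for the real transfer; the content is synthetic.
with tempfile.TemporaryDirectory() as workdir:
    source = Path(workdir) / "export.sql"
    source.write_bytes(b"-- synthetic export, no real data\n")

    destination = Path(workdir) / "export_copy.sql"
    shutil.copyfile(source, destination)

    transfer_intact = sha256_of_file(source) == sha256_of_file(destination)

print(transfer_intact)  # True
```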

Then comes the environment itself. This is where many teams need help. Even if the code is solid, the infrastructure still has to be configured and maintained correctly. HHS guidance and NIST’s implementation resource both point back to the same idea: safeguarding ePHI requires more than deploying software. It requires an environment and process that support secure ongoing operations.

→ Built the app already? The next move is secure deployment.

Start with HIPAA Vault Hosting Solutions and Risk Assessment if your team needs operational support after launch.

The hidden challenge is not launch. It is maintenance.

One of the strongest quotes in the podcast sums it up:

“The HIPAA compliance world is not a one and done.”

That is more than a good line. It is the operating reality.

A healthcare application does not stay safe because it was configured well once. Dependencies age. Permissions change. Systems need patching. Vulnerabilities emerge. Backups need verification. Logs need review. HHS says its security guidance is intended to help covered entities identify and implement appropriate safeguards and comply with risk analysis requirements, while NIST’s HIPAA resource guide is built around implementation and continued security posture improvement.
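
To make the recurring nature of that work concrete, here is a minimal sketch of a maintenance tracker that reports which tasks are overdue. The `RecurringTask` record, the task names, and especially the intervals are illustrative assumptions. They are not intervals prescribed by HIPAA, HHS, or NIST; an organization's own risk analysis should set them.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RecurringTask:
    """A maintenance task that must be repeated (hypothetical record shape)."""
    name: str
    interval_days: int  # illustrative value, not a regulatory requirement
    last_done: date


def overdue_tasks(tasks: list[RecurringTask], today: date) -> list[str]:
    """Return the names of tasks whose interval has elapsed since last done."""
    return [
        t.name
        for t in tasks
        if today - t.last_done > timedelta(days=t.interval_days)
    ]


# Fixed date so the example is reproducible.
today = date(2025, 1, 31)
tasks = [
    RecurringTask("apply OS and dependency patches", 30, date(2024, 12, 15)),
    RecurringTask("test-restore a backup", 90, date(2024, 12, 1)),
    RecurringTask("review access logs", 7, date(2025, 1, 20)),
]

overdue = overdue_tasks(tasks, today)
print(overdue)  # ['apply OS and dependency patches', 'review access logs']
```

Even a tracker this simple makes the point of the quote visible: the list is never permanently empty, because every completed task starts its own clock again.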

In plain English, you do not finish security and walk away.

That is why many organizations do better with a managed operating model than with a do-it-yourself model, especially when the app was built quickly and the internal team is strong on product ideas but not looking to become a healthcare DevOps shop.

Shared responsibility is what separates serious healthcare deployment from wishful thinking

There is no magic vendor that makes the whole problem disappear.

A hosting provider can support the environment. A managed services team can help with patching, hardening, monitoring, and operational support. But your organization still owns the workflows, user roles, business logic, integrations, and internal use of the system.

HHS’s materials on covered entities and business associates make that structure clear: covered entities have obligations, business associates have obligations, and the arrangement between them must be formalized and aligned with the HIPAA Rules.

That means the right question is not:

Who can make this compliant for us?

The better question is:

Who can help us create the right environment and support our responsibilities in a way that reduces risk?

That is a much smarter way to frame the decision, and it is exactly where HIPAA Vault’s service mix makes sense.

The real opportunity is bigger than compliance

This is not an anti-AI article. It is the opposite.

AI is opening the door for physicians, founders, and healthcare operators to build useful software faster than ever before. That is a real advantage. It means smaller teams can create better workflow tools, test niche ideas sooner, and build around the way care is actually delivered.

But faster development only becomes real business value when the deployment path is handled correctly.

If the app never makes it safely beyond the laptop, the innovation stalls out right where the value should begin.

That is why this moment matters. Healthcare does not need less experimentation. It needs a better bridge between rapid application building and secure production use.

Adam’s closing quote says it perfectly:

“The tools are changing but the mission is the same — keeping patient data safe.”

If you built an AI health app and now want to use it in a real healthcare setting, the next move is not another round of prompt tuning. The next move is to evaluate the environment around the app.

Confirm where ePHI is involved. Review every vendor. Verify BAA readiness early. Separate test data from live data. Then move the application into an environment that can support secure healthcare operations over time. That approach is directly aligned with HHS guidance on the Security Rule, cloud services, business associates, and risk analysis, as well as NIST’s cybersecurity implementation guide for HIPAA-regulated entities.

That is where HIPAA Vault can help you move from prototype to secure production.

The tools have changed. The responsibility has not.